Focusing on some of the data behind Current/Future State of Higher Education (CFHE12) has been very stimulating and has got me thinking about the generation and flow of data on many levels. Having recently produced some data visualisations of ds106 for OpenEd12, it was reassuring that one of the first questions was “is the #ds106 data openly licensed?”. Reassuring because it is good to see that people are thinking beyond open content and open learning experiences and thinking about open data as well. So what data is available around CFHE12? In this post I look at the data feeds available from CFHE12, see what we can get, and suggest some alternative ways of getting the data and pulling it into other services for analysis. Finally, I highlight the issues with collecting participant feeds filtered by tag/category/label.

Working down some of the menu options on the CFHE12 home page, let’s see what we’ve got and how easy it is to digest.

Newsletter Archives

This page contains a link to each ‘Daily Newsletter’ sent out by gRSShopper (Stephen Downes’ RSS/content collection, remix and distribution system). I’m not familiar with how, or if, the Daily data can be exported via an official API, but with tools like Google Spreadsheet, Yahoo Pipes and others it’s possible to extract a link to each edition of the Daily using basic screen-scraping techniques such as XPath. So in this sheet, in cell A2, I can get a list of archive pages using =ImportXML("http://edfuture.mooc.ca/cgi-bin/archive.cgi?page=newsletter.htm","//a/@href"). Applying ImportXML again to each page in the resulting list, it’s possible to get a comma-separated list of all the posts in each Daily (column B).
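For anyone who would rather do this step outside a spreadsheet, here is a minimal Python sketch of what the //a/@href expression selects: the href of every link on a page. It uses only the standard library, and the sample markup and file names are made up for illustration; they are not copied from the real archive page.

```python
from html.parser import HTMLParser


class HrefCollector(HTMLParser):
    """Collect the href of every <a> tag, roughly what //a/@href selects."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value is not None:
                    self.hrefs.append(value)


def extract_hrefs(html):
    """Return all link targets found in an HTML string, in document order."""
    parser = HrefCollector()
    parser.feed(html)
    parser.close()
    return parser.hrefs


# Hypothetical markup standing in for the newsletter archive page.
SAMPLE_ARCHIVE = """
<ul>
  <li><a href="archive/12/10_08_newsletter.htm">October 8</a></li>
  <li><a href="archive/12/10_09_newsletter.htm">October 9</a></li>
  <li><a name="top">an anchor without an href</a></li>
</ul>
"""

print(extract_hrefs(SAMPLE_ARCHIVE))
```

In practice the HTML string would come from fetching the archive page, which is left out here so the sketch runs without network access.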

The formula in column B includes a QUERY statement and is perhaps worthy of a blog post in its own right. Here it is: =IF(ISBLANK(A2),"",JOIN(",",QUERY(ImportXML(A2,"//a[.='Link']/@href"),"Select * WHERE not(Col1 contains('twitter.com'))"))). In brief, the pseudocode is: if the cell is blank return nothing, otherwise comma-join the array of results from ImportXML for links which use the text ‘Link’, keeping only those that don’t contain a link to twitter.com. Note: there is a limit of 50 ImportXML formulas per spreadsheet, so I’ll either have to flatten the data or switch to Yahoo Pipes.
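The same pseudocode can be sketched in Python. This is only an illustration of the logic, not a replacement for the sheet: the class and function names are my own, and the sample HTML is invented. Like the XPath test .='Link', the comparison on the link text is exact.

```python
from html.parser import HTMLParser


class LinkTextCollector(HTMLParser):
    """Record an (href, text) pair for each <a> element that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text)))
            self._href = None


def daily_post_links(html, label="Link", exclude="twitter.com"):
    """Comma-join hrefs whose link text equals `label` and that do not contain `exclude`."""
    parser = LinkTextCollector()
    parser.feed(html)
    parser.close()
    kept = [href for href, text in parser.links
            if text == label and exclude not in href]
    return ",".join(kept)


# Hypothetical fragment of a Daily Newsletter page.
SAMPLE_DAILY = (
    '<p>Post one [<a href="http://example.org/post-1">Link</a>]</p>'
    '<p>A tweet [<a href="http://twitter.com/someone/status/1">Link</a>]</p>'
    '<p>Post two [<a href="http://example.org/post-2">Link</a>] '
    '[<a href="http://example.org/comment">Comment</a>]</p>'
)

print(daily_post_links(SAMPLE_DAILY))
```

An empty page gives an empty string, which mirrors the ISBLANK branch of the formula.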

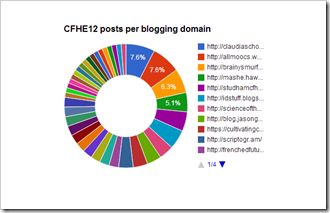

The resulting data is of limited use, but it’s useful to see how many posts have been published via gRSShopper and by whom.

It’s at this point that we start to get a sign that the data isn’t entirely clean. For example, in cell A153 I’ve queried the Daily Newsletter posts from this blog and I get the four results shown below:

- https://hawksey.info/blog/2012/10/filtering-a-twitter-hashtag-for-community-questions-and-responses-situational-awareness/

- https://hawksey.info/blog/2012/10/cfhe12-week-1-analysis-twitter-isnt-so-massive/

- https://hawksey.info/blog/2012/10/cfhe12-week-1-analysis-twitter-isnt-so-massive/

- https://hawksey.info/blog/2012/10/summary-of-social-monitoring-tools-and-recipes/

You can see there is a double post and, actually, at the time of writing I’ve only made two posts tagged with cfhe12. Moving on (but coming back to this point later), let’s now look at the feed options.
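Before moving on, the double post is at least easy to tidy up after collection. A small Python sketch, assuming the links have already been pulled into a list (the function name is mine), drops repeats while keeping the original order. Note that it only fixes the duplicate; it does nothing about posts that were picked up without the cfhe12 tag.

```python
def dedupe_preserving_order(urls):
    """Return the URLs with later repeats removed, keeping first-seen order."""
    seen = set()
    unique = []
    for url in urls:
        if url not in seen:
            seen.add(url)
            unique.append(url)
    return unique


# The four results returned for this blog, including the double post.
results = [
    "https://hawksey.info/blog/2012/10/filtering-a-twitter-hashtag-for-community-questions-and-responses-situational-awareness/",
    "https://hawksey.info/blog/2012/10/cfhe12-week-1-analysis-twitter-isnt-so-massive/",
    "https://hawksey.info/blog/2012/10/cfhe12-week-1-analysis-twitter-isnt-so-massive/",
    "https://hawksey.info/blog/2012/10/summary-of-social-monitoring-tools-and-recipes/",
]

print(len(dedupe_preserving_order(results)))  # 3
```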

Feeds

The course has feed options for: Announcements RSS; Blog Posts RSS; and OPML List of Feeds. I didn’t have much luck with any of these. The RSS feeds have data but aren’t valid RSS, and the OPML (a file format which can be used for bundling lots of blog feeds together) only had 16 items and was also not valid (I should really have a peek at the source for gRSShopper and make suggestions for fixes, but time and lack of Perl knowledge have prevented that so far). I did attempt some custom Apps Script to capture the Blog Posts RSS to this sheet, but I’m not convinced it’s working properly, in part because the source feed is not valid. There are other feeds not listed on the home page that I might dig into, like the Diigo CFHE12 Group, which I’m collecting data from in a Google Spreadsheet using this IFTTT recipe.
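To make “not valid” a little more concrete, here is a rough Python sketch of the sort of basic checks a feed has to pass before most tools will read it: it must be well-formed XML, the root must be rss, and the channel must carry the title, link and description elements that RSS 2.0 requires. This is far from a full validator, the function name is my own, and the sample feeds are invented rather than taken from the course.

```python
import xml.etree.ElementTree as ET

# Elements RSS 2.0 requires inside <channel>.
REQUIRED_CHANNEL_ELEMENTS = ("title", "link", "description")


def check_rss(text):
    """Return a list of problems found; an empty list means the basic checks passed."""
    try:
        root = ET.fromstring(text)
    except ET.ParseError as err:
        return [f"not well-formed XML: {err}"]
    if root.tag != "rss":
        return [f"root element is <{root.tag}>, expected <rss>"]
    channel = root.find("channel")
    if channel is None:
        return ["missing <channel>"]
    return [f"<channel> is missing <{name}>"
            for name in REQUIRED_CHANNEL_ELEMENTS
            if channel.find(name) is None]


# Hypothetical feeds for illustration only.
GOOD_FEED = (
    '<rss version="2.0"><channel>'
    "<title>Example</title><link>http://example.org/</link>"
    "<description>An example feed</description>"
    "</channel></rss>"
)
BAD_FEED = '<rss version="2.0"><channel><title>Home &rsaquo; Blog</title></channel></rss>'

print(check_rss(GOOD_FEED))
print(check_rss(BAD_FEED))
```

The second sample fails at the first hurdle because it uses an HTML entity that XML does not define, so the parser gives up before the structure can be checked.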

Generating an OPML and Blog Post RSS from ‘View List of Blogs’ data

OPML

All is not lost. gRSShopper also generates a page of blogs it’s aggregating. With a bit more XPath magic (=ImportXML("http://edfuture.mooc.ca/feeds.htm","//a[.='XML']/@href")) I can scrape the XML links for each registered blog into this sheet. Using the Spreadsheet -> OPML Generator I get this OPML file of CFHE12 blogs (because the spreadsheet and OPML generator sit in the cloud this link will automatically update as blogs are added or removed from the Participant Feeds page). For more details on this recipe see Generating an OPML RSS bundle from a page of links using Google Spreadsheets.
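If you want to see what the generator step amounts to, here is a minimal Python sketch that turns a list of feed URLs into an OPML document. It is not the code behind the Spreadsheet -> OPML Generator; the function name and the example.org feeds are placeholders, and each outline simply reuses the URL as its text.

```python
import xml.etree.ElementTree as ET


def build_opml(title, feed_urls):
    """Build an OPML 2.0 string with one rss outline per feed URL."""
    opml = ET.Element("opml", version="2.0")
    head = ET.SubElement(opml, "head")
    ET.SubElement(head, "title").text = title
    body = ET.SubElement(opml, "body")
    for url in feed_urls:
        # OPML outlines need a text attribute; rss outlines also need xmlUrl.
        ET.SubElement(body, "outline", type="rss", text=url, xmlUrl=url)
    return ET.tostring(opml, encoding="unicode")


# Placeholder feeds standing in for the scraped XML links.
feeds = [
    "http://example.org/blog-a/feed/",
    "http://example.org/blog-b/feeds/posts/default?alt=rss&max-results=5",
]

print(build_opml("CFHE12 blogs", feeds))
```

Building the file with an XML library rather than string concatenation means characters such as & in feed URLs are escaped for you, which is one less way to end up with an invalid bundle.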

Blog posts RSS

Earlier I highlighted a possible issue with posts being included in the Daily Newsletter. This is because it can be very tricky to get an RSS feed for a particular tag/category/label from someone’s blog. You only need to look at Jim Groom’s post on Tag Feeds for a variety of Blogging Platforms to get an idea of the variation between platforms. It’s worth underlining the issue here: each blogging platform has a different way of getting a filtered RSS feed for a specific tag/category/label, and in certain cases it’s not possible to get a filtered RSS feed at all. When a student registers a feed for an online course it can be difficult for them to identify their own blog’s RSS feed, let alone a filtered feed.
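To give a flavour of that variation, here is a small Python sketch that builds a tag feed URL for two platforms. The two patterns are the commonly documented defaults for WordPress tags and Blogger labels as I understand them; individual blogs can differ (custom permalinks, categories rather than tags), so treat them as assumptions. The function name and the example blog addresses are made up.

```python
from urllib.parse import quote


def tag_feed_url(blog_url, platform, tag):
    """Build a tag/label feed URL from a blog's base URL.

    Assumes `tag` is already in the form the platform uses in its URLs
    (for WordPress that is the tag slug, for Blogger the label text).
    """
    base = blog_url.rstrip("/")
    safe_tag = quote(tag, safe="")
    if platform == "wordpress":
        return f"{base}/tag/{safe_tag}/feed/"
    if platform == "blogger":
        return f"{base}/feeds/posts/default/-/{safe_tag}?alt=rss"
    # Some platforms have no filtered feed, or use a pattern not covered here.
    raise ValueError(f"no known tag feed pattern for platform {platform!r}")


print(tag_feed_url("http://example.wordpress.com/", "wordpress", "cfhe12"))
print(tag_feed_url("http://example.blogspot.com", "blogger", "CFHE12"))
```

Even this toy version needs to know which platform a blog runs on, which is exactly the information a student pasting a URL into a registration form is unlikely to supply.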

Before you go off thinking Yahoo Pipes is the answer for all your open course RSS aggregation, there are some big questions over reliability and how this solution would scale. It’s also interesting to note all the error messages caused by bad source feeds:

warning Error fetching http://edfuture.mooc.ca/feed://bigedanalytics.blogspot.com/feeds/posts/default?alt=rss. Response: Not Found (404)

warning Error fetching http://florianmeyer.blogspot.com. Response: OK (200). Error: Invalid XML document. Root cause: org.xml.sax.SAXParseException; lineNumber: 1483; columnNumber: 5; The element type “meta” must be terminated by the matching end-tag “</meta>”.

warning Error fetching http://edfuture.mooc.ca/cain.blogspot.com. Response: Not Found (404)

warning Error fetching http://larrylugo.blogspot.com/feeds/comments/default?alt=rss/-/CFHE12. Response: Bad Request (400)

warning Error fetching http://edfuture.mooc.ca/taiarnold.wordpress.com/feed. Response: Not Found (404)

warning Error fetching http://futuristiceducation.wordpress.com/wp-admin/index.php?page=my-blogs. Response: OK (200). Error: Invalid XML document. Root cause: org.xml.sax.SAXParseException; lineNumber: 5; columnNumber: 37; The entity “rsaquo” was referenced, but not declared.

warning Error fetching http://edtech.vccs.edu/feed/: Results may be truncated because the run exceeded the allowed max response timeout of 30000ms.
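Several of the 404s above look like registered addresses that used a feed:// prefix or had no scheme at all, and so ended up being treated as paths on edfuture.mooc.ca. That is my reading of the URLs rather than something I’ve confirmed. If it is right, a little normalisation at registration time would catch them. Here is a minimal Python sketch (the function name is mine, and the inputs are the tails of the failing URLs, used as guesses at what was originally entered):

```python
def normalise_feed_url(raw):
    """Tidy a user-entered feed address into an absolute http URL.

    Handles two assumed failure modes: a feed:// prefix and a missing scheme.
    Anything that already has a scheme is returned unchanged.
    """
    url = raw.strip()
    if url.lower().startswith("feed://"):
        url = "http://" + url[len("feed://"):]
    elif "://" not in url:
        url = "http://" + url
    return url


# Guessed original entries, taken from the tails of the failing URLs above.
for entered in (
    "feed://bigedanalytics.blogspot.com/feeds/posts/default?alt=rss",
    "taiarnold.wordpress.com/feed",
    "http://edtech.vccs.edu/feed/",
):
    print(normalise_feed_url(entered))
```

This does nothing for the other errors in the list: a blog home page or admin page registered instead of a feed, or a malformed label feed, still needs a person (or a smarter registration form) to fix.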

Rather than trying to work with messy data one strategy would be to start with a better data source. I have a couple of thoughts on this I’ve shared in Sketch of a cMOOC registration system.

Summary

So what have I shown? Data is messy. Data use often leads to data validation. Making any data available means someone else might be able to do something useful with it. In the context of cMOOCs, getting a filtered feed of content isn’t easy.

Something I haven’t touched upon is how the data is licensed. There are lots of issues with embedding license information in data files. For example, I’m sure technically the OPML file I generated should be licensed CC-BY-NC-SA CFHE12 because this is the license of the source data? I’m going to skip over this point but welcome your comments (you might also want to check the Open Data Licensing Animation from the OERIPR Support Project).

[I’ve also quietly ignored getting data from the course discussion forums and Twitter archive (the latter is here)]

PS Looking forward to next week’s CFHE12 topic, Big data and Analytics 😉
