Yesterday I got stuck into the first week of the Coursera course on Computing for Data Analysis. The course is about:

learning the fundamental computing skills necessary for effective data analysis. You will learn to program in R and to use R for reading data, writing functions, making informative graphs, and applying modern statistical methods.

You might be asking: given that I’ve already dabbled in R, why am I taking an introductory course? As I sat watching the lectures on my own (if anyone wants to do a Google Hangout and watch next week’s lectures together, let me know) I reminisced about how I learned to swim. The basic story is: a 6-year-old boy is staying in a posh hotel for the first time, nags his parents to take him to the swimming pool and, when they get there, gets changed, runs off and jumps in at the deep end. When I eventually came back to the surface I assumed the doggy paddle and was ‘swimming’ … well, ‘swimming’ in the sense that I wasn’t drowning.

The method of ‘throwing myself in’ is replicated throughout my life, particularly when it comes to learning. So whilst I’ve already thrown myself into R, I can survive, but only just, and what I’ve produced is mainly the result of trying not to drown. This revelation was particularly clear when learning about subsetting (picking out parts of a dataset):

early #compdata win, understanding subsetting in R particularly logical, partial matching & removing missing data youtu.be/hWbgqzsQJF0

— Martin Hawksey (@mhawksey) September 27, 2012
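For anyone who hasn’t met those three ideas yet, here is a minimal sketch of each in base R. The `tweets` data frame and the `settings` list are made-up examples for illustration only; they are not taken from the course or from my data.

```r
# Toy data standing in for a table of tweets (all values are invented)
tweets <- data.frame(
  user     = c("alice", "bob", "carol", "dave"),
  retweets = c(3, NA, 0, 7),
  stringsAsFactors = FALSE
)

# Logical subsetting: keep the rows where the condition is TRUE.
# which() drops the NA comparison, which would otherwise give an all-NA row.
popular <- tweets[which(tweets$retweets > 2), ]

# Partial matching: $ accepts a unique prefix of a list element's name,
# whereas [[ ]] only does so when asked with exact = FALSE.
settings <- list(searchterm = "#compdata", limit = 100)
by_dollar  <- settings$search                      # matches "searchterm"
by_bracket <- settings[["search", exact = FALSE]]  # matches "searchterm"
no_match   <- settings[["search"]]                 # exact match only, so NULL

# Removing missing data: complete.cases() flags rows that contain no NA.
clean <- tweets[complete.cases(tweets), ]
```

Partial matching is handy at the console but easy to trip over in scripts, so spelling names out in full is probably the safer habit.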

I’ve got an example where I’ve been practicing my subsetting skills with NodeXL data later in this post, but first some quick reflections about my experience on the course so far.

MOOCing about in Coursera

So hopefully you’ve already got the picture that I’m a fairly independent learner, so I haven’t bothered with the built-in discussion boards, instead opting to view the lectures (I’m finding x1.5 speed suits me) and take this week’s quiz. The assignment due for week 2 has already been announced and people are racing ahead to get it done (which appears to have forced the early release of next week’s content).

Something apparent to me in the Coursera site is the lack of motivational cues. I’ve got no idea how I’m doing in relation to my 40,000 fellow students in terms of watching the lectures or in this week’s quiz. Trying to get my bearings using the #compdata Twitter hashtag hasn’t been that successful, because in the last 7 days there have only been 65 people using or mentioned with the tag (and of the 64 tweets, 29 were ‘I just signed up for Computing for Data Analysis #compdata …’).

Things are looking up on the Twitter front though as some recent flares have gone up:

@xmacex Now when @mhawksey is here in #compdata you know you’re in the right place! JISC (CETIS) and he: #FF#rstats

— Tuija Sonkkila (@ttso) September 27, 2012

and @hywelm has also made himself known 😉

Will there be much community building in the remaining 3 weeks?

Mucking about with NodeXL and R

In the section above I’ve mentioned various Twitter stats. To practice this week’s main compdata topics of reading data and subsetting I thought I’d have a go at getting the answers from a dataset generated in NodeXL (I could have got them straight from NodeXL but where is the fun in that ;).

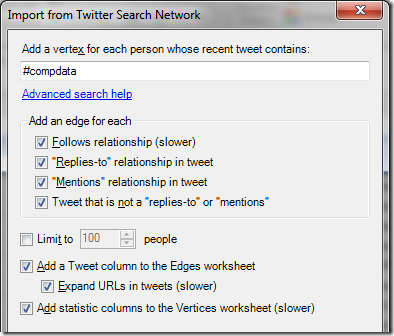

Step 1 was to fire up NodeXL and import a Twitter Search for #compdata with all of the boxes ticked except Limit to… .

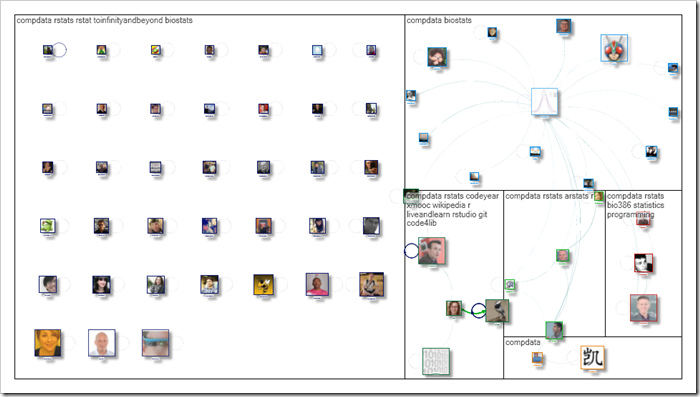

As a small aside, I grabbed the NodeXL Options Used to Create the Graph used in this MOOC search by Marc Smith, hit the automate button and came up with the graph shown below (look at those isolates <sigh>):

To let other people play along I then uploaded the NodeXL spreadsheet file in .xlsx to Google Docs making sure the ‘Convert documents …’ was checked and here it is as a Google Spreadsheet. By using File > Publish to the web… I can get links for .csv versions of the sheets.

In R I wrote the following script:

If you run the script you should see various answers pop out. As I’m learning this, if anyone would like to suggest improvements please do. My plan is to keep adding to the data and extending the script as the weeks go by, to practise my skills and see what other answers I can find.
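To give a flavour of the kind of reading and subsetting involved, here is a minimal sketch rather than the script itself. It uses a tiny inline stand-in for the published Edges sheet so it runs anywhere; the column names (`Vertex1`, `Vertex2`, `Relationship`, `Tweet`) and every row are assumptions made up for illustration, and the real sheet may well be laid out differently.

```r
# Inline stand-in for the published sheet (invented rows, assumed columns).
# With a real "Publish to the web" .csv link you would instead call
# read.csv(csv_url, stringsAsFactors = FALSE), where csv_url is that link.
edges_csv <- "Vertex1,Vertex2,Relationship,Tweet
alice,alice,Tweet,I just signed up for Computing for Data Analysis #compdata
bob,alice,Mentions,@alice enjoying #compdata so far
carol,carol,Tweet,I just signed up for Computing for Data Analysis #compdata
dave,bob,Replies to,@bob me too #compdata
dave,,Tweet,
"

# Reading data: treat empty cells as missing values
edges <- read.csv(text = edges_csv, stringsAsFactors = FALSE, na.strings = "")

# Removing missing data: drop rows that have no tweet text
edges <- edges[!is.na(edges$Tweet), ]

# People using or mentioned with the tag: unique names across both columns
people <- unique(c(edges$Vertex1, edges$Vertex2))
people <- people[!is.na(people)]

# Logical subsetting: rows whose tweet starts with the sign-up message
signups <- edges[grepl("^I just signed up for", edges$Tweet), ]

cat("People:", length(people), "\n")
cat("Tweets:", nrow(edges), "\n")
cat("Sign-up tweets:", nrow(signups), "\n")
```

Counting people from both vertex columns is one way to cover ‘using or mentioned with’; other definitions would give different numbers.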