In May 2011 JISC CETIS announced the winners of the OER Technical Mini-Projects. These projects were designed:

to explore specific technical issues that have been identified by the community during CETIS events such as #cetisrow and #cetiswmd and which have arisen from the JISC / HEA OER Programmes

JISC CETIS OER Technical Mini Projects Call

Source : http://blogs.cetis.ac.uk/philb/2011/03/02/jisc-cetis-oer-technical-mini-projects-call/

License: http://creativecommons.org/licenses/by/3.0/

Author: Phil Barker, JISC CETIS

One of the successfully funded projects was CaPRéT – Cut and Paste Reuse and Tracking, from Brandon Muramatsu, MIT OEIT, and Justin Ball and Joel Duffin, Tatemae. I’ve already touched upon OER tracking in day 24 and day 30, briefly looking at social shares of OER repository records. Whilst projects like the Learning Registry have the potential to help, it is still early days and tracking still seems to be an afterthought, which has been picked up in various technical briefings. CaPReT tries to address part of this problem, as stated in the introduction to their final report:

Teachers and students cut and paste text from OER sites all the time—usually that’s where the story ends. The OER site doesn’t know what text was cut, nor how it might be used. Enter CaPRéT: Cut and Paste Reuse Tracking. OER sites that are CaPRéT-enabled can now better understand how their content is being used.

When a user cuts and pastes text from a CaPRéT-enabled site:

- The user gets the text as originally cut, and if their application supports the pasted text will also automatically include attribution and licensing information.

- The OER site can also track what text was cut, allowing them to better understand how users are using their site.

The code and other resources can be found on their site. You can also read Phil Barker’s (JISC CETIS) experience testing CaPReT and feedback and comments about the project on the OER-DISCUSS list.

One of the great things about CaPReT is that the activity data is available for anyone to download (or as summaries: Who’s using CaPRéT right now? | CaPRéT use in the last hour, day and week | CaPRéT use by day).

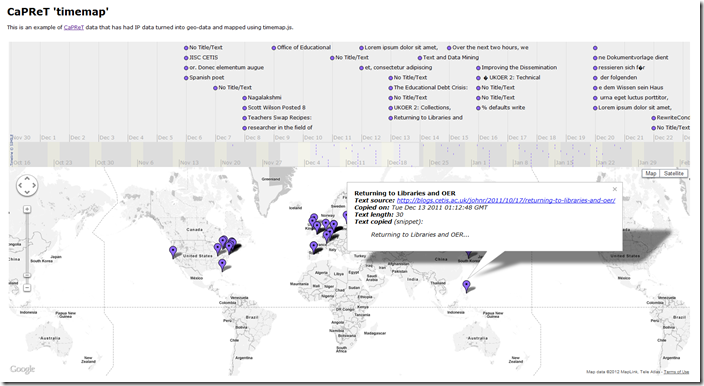

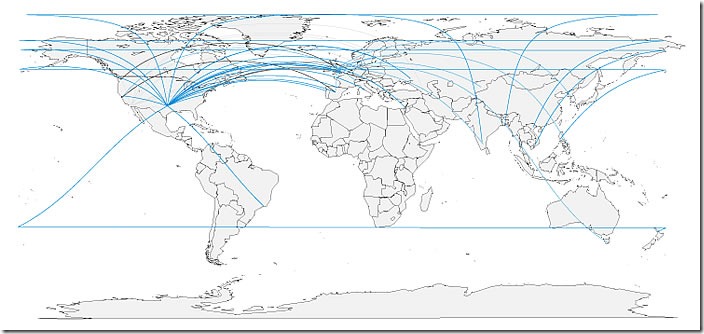

One of the challenges set to me by Phil Barker was to see what I could do with the CaPReT data. Here’s what I’ve come up with. First, a ‘great circles’ map of CaPReT usage, plotting the source website and where in the world the text was copied from (click on image for full scale):

Both these examples rely on the same refined data source rendered in different ways, and in this post I’ll tell you how it was done. As always it would be useful to get your feedback as to whether these visualisations are useful, things you’d improve or other ways you might use the recipes.

How was it made – getting geo data

- Copied the CaPReT tabular data into Excel (the .csv download didn’t work well for me, as columns got mixed up on unescaped commas) and saved it as .xls

- Imported the .xls into Google Refine. The main operation was to convert text source and copier IP/domains to geo data using www.ipinfodb.com. An importable set of routines can be downloaded from this gist. [Couple of things to say about this: CaPReT have a realtime map, but I couldn’t see any locations come through. If they are converting IP to geo it would be useful if this was recorded in the tabular results. IP/domain location lookups can also be a bit misleading; for example, my site is hosted in Canada, I’m typing in France and soon I’ll be back in Scotland.]

- Exported the results to Excel, removed duplicates based on ‘text copied on’ dates, and uploaded the data to a Google Spreadsheet
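The lookup and de-duplication steps could also be scripted. The following is a minimal sketch, not the Google Refine routines from the gist. It assumes each record is a dict with the hypothetical keys `source`, `copier` and `copied_on`, and it takes the geolocation service as a `lookup` function you supply, so no particular ipinfodb API is assumed. Lookups are cached so each host is only resolved once.

```python
from typing import Callable, Dict, Iterable, List, Optional, Tuple

LatLon = Tuple[float, float]


def dedupe_by_timestamp(rows: Iterable[dict], key: str = "copied_on") -> List[dict]:
    """Keep only the first row seen for each timestamp value."""
    seen = set()
    unique = []
    for row in rows:
        if row[key] not in seen:
            seen.add(row[key])
            unique.append(row)
    return unique


def add_geo(rows: Iterable[dict],
            lookup: Callable[[str], Optional[LatLon]]) -> List[dict]:
    """Attach (lat, lon) for the source and copier of each row.

    `lookup` is whatever IP/domain-to-location service you choose to wrap;
    it should return None when a host cannot be located.
    """
    cache: Dict[str, Optional[LatLon]] = {}
    enriched_rows = []
    for row in rows:
        enriched = dict(row)  # copy so the input rows are left untouched
        for field in ("source", "copier"):
            host = row[field]
            if host not in cache:
                cache[host] = lookup(host)
            enriched[field + "_latlon"] = cache[host]
        enriched_rows.append(enriched)
    return enriched_rows
```

Passing the lookup in as a function also makes it easy to swap services or to test with canned locations, which helps given how misleading IP-based locations can be.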

Making CaPReT ‘great circles’ map

| | Var1 | Freq |
|---|---|---|
| 1 | blogs.cetis.ac.uk | 35 |
| 2 | capret.mitoeit.org | 8 |
| 3 | dl.dropbox.com | 2 |
| 4 | freear.org.uk | 43 |
| 5 | translate.googleusercontent.com | 1 |
| 6 | www.carpet-project.net | 4 |
| 7 | www.icbl.hw.ac.uk | 1 |
| 8 | www.mura.org | 19 |

This was rendered in RStudio using Nathan Yau’s (Flowing Data) How to map connections with great circles. The R code I used is here. The main differences are reading the data from a Google Spreadsheet and handling the data slightly differently. (Who would have thought as.data.frame(table(dataset$domain)) would turn the source spreadsheet into the frequency table above? #stilldiscoveringR)
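For anyone not working in R, the same kind of frequency table can be sketched in Python. This is an illustrative equivalent rather than the code used for the map; `domain_frequencies` is a hypothetical helper name, and the rows are sorted by domain to mimic the alphabetical ordering of the R output.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def domain_frequencies(domains: Iterable[str]) -> List[Tuple[str, int]]:
    """Count how often each domain appears, sorted by domain name."""
    counts = Counter(domains)
    return sorted(counts.items())


if __name__ == "__main__":
    sample = ["www.mura.org", "freear.org.uk", "www.mura.org"]
    for domain, freq in domain_frequencies(sample):
        print(domain, freq)
```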

I should also say that there was some post-production work done on the map. For some reason some of the ‘great circles’ weren’t so great and wrapped around the map a couple of times. Fortunately these anomalies can easily be removed using Inkscape (and while I was there I added a drop shadow).
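I didn’t diagnose the wrap-around, but one common cause of this kind of artefact is a line crossing the ±180° longitude edge of a flat map, so that the plot joins a point on the far right to one on the far left. Assuming that is what happened here, a minimal sketch of a fix is to compute the great circle points and break the line wherever the longitude jumps by more than 180°. The function names are hypothetical and this is not the R code used for the map.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (latitude, longitude) in degrees


def great_circle_points(lat1: float, lon1: float,
                        lat2: float, lon2: float, n: int = 50) -> List[Point]:
    """Return n + 1 points along the great circle between two locations.

    Exactly antipodal endpoints are not handled (the route is ambiguous).
    """
    p1, l1, p2, l2 = (math.radians(v) for v in (lat1, lon1, lat2, lon2))
    # Angular distance between the endpoints (haversine formula).
    h = (math.sin((p2 - p1) / 2) ** 2
         + math.cos(p1) * math.cos(p2) * math.sin((l2 - l1) / 2) ** 2)
    d = 2 * math.asin(min(1.0, math.sqrt(h)))
    if d == 0:
        return [(lat1, lon1)]
    points = []
    for i in range(n + 1):
        f = i / n  # fraction of the way along the route
        a = math.sin((1 - f) * d) / math.sin(d)
        b = math.sin(f * d) / math.sin(d)
        x = a * math.cos(p1) * math.cos(l1) + b * math.cos(p2) * math.cos(l2)
        y = a * math.cos(p1) * math.sin(l1) + b * math.cos(p2) * math.sin(l2)
        z = a * math.sin(p1) + b * math.sin(p2)
        points.append((math.degrees(math.atan2(z, math.hypot(x, y))),
                       math.degrees(math.atan2(y, x))))
    return points


def split_at_antimeridian(points: List[Point]) -> List[List[Point]]:
    """Start a new segment whenever longitude jumps by more than 180 degrees."""
    segments = [[points[0]]]
    for prev, cur in zip(points, points[1:]):
        if abs(cur[1] - prev[1]) > 180:
            segments.append([])
        segments[-1].append(cur)
    return segments
```

Each segment can then be drawn as its own line. This leaves a small gap at the map edge rather than a stray line across the whole map.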

Making CaPReT ‘timemap’

Whilst having a look around SIMILE-based timelines for day 30 I came across the timemap.js project which:

is a Javascript library to help use online maps, including Google, OpenLayers, and Bing, with a SIMILE timeline. The library allows you to load one or more datasets in JSON, KML, or GeoRSS onto both a map and a timeline simultaneously. By default, only items in the visible range of the timeline are displayed on the map.

Using the Basic Example, Google v3 (because it allows custom styling of Google Maps) and Google Spreadsheet Example I was able to format the refined data already uploaded to a Google Spreadsheet and then plug it in as a data source for the visualisation (I did have a problem with reading data from a sheet other than the first one, which I’ve logged as an issue including a possible fix).
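If the spreadsheet route isn’t an option, the refined data could instead be exported as JSON for timemap.js to load. The sketch below is an assumption-laden illustration: the item shape (`title`, `start`, and `point` with `lat`/`lon`) is my reading of the timemap.js basic examples and should be checked against the version in use, and the input keys `source`, `copied_on`, `lat` and `lon` are hypothetical column names.

```python
import json
from typing import Iterable, List


def to_timemap_items(rows: Iterable[dict]) -> List[dict]:
    """Convert refined rows into a list of map/timeline items.

    Assumed item shape: a title, a start date and a lat/lon point.
    """
    items = []
    for row in rows:
        items.append({
            "title": row["source"],
            "start": row["copied_on"],
            "point": {"lat": float(row["lat"]), "lon": float(row["lon"])},
        })
    return items


if __name__ == "__main__":
    sample = [{"source": "freear.org.uk", "copied_on": "2011-11-01",
               "lat": "55.95", "lon": "-3.19"}]
    print(json.dumps(to_timemap_items(sample), indent=2))
```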

A couple of extras I wanted to add to this example are showing the source and allowing the user to filter based on it. There’s also an issue of scalability. Right now the map is rendering 113 entries; if CaPReT were to take off, the spreadsheet would quickly fill up and the visualisation would probably grind to a halt.

[I might be revisiting timemap.js as they have another example for a temporal heatmap, which might be good for showing Jorum UKOER deposits by institution.]
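Neither extra is implemented yet, but one way to approach both would be to trim the data before it reaches the map. The sketch below is only a suggestion under stated assumptions: rows are dicts with hypothetical `source` and `copied_on` keys, and `copied_on` holds ISO 8601 date strings, which sort chronologically as plain text.

```python
from typing import Iterable, List


def filter_by_source(rows: Iterable[dict], source: str) -> List[dict]:
    """Keep only the rows copied from the given source website."""
    return [row for row in rows if row["source"] == source]


def most_recent(rows: Iterable[dict], limit: int) -> List[dict]:
    """Return at most `limit` rows, newest first.

    Relies on ISO 8601 strings sorting in date order when compared as text.
    """
    return sorted(rows, key=lambda row: row["copied_on"], reverse=True)[:limit]
```

Capping the number of rows keeps the rendering cost bounded however large the spreadsheet grows, at the price of hiding older activity.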

So there you go, two recipes for converting IP data into something else. I can already see myself using both methods in other aspects of the OER Visualisation project and in other projects. And all of this was possible because CaPReT had some open data.

I should also say JISC CETIS have a wiki on Tracking OERs: Technical Approaches to Usage Monitoring for UKOER and Tony also recently posted Licensing and Tracking Online Content – News and OERs.