So I make yet another foray into a world that I know little about, potentially making statements that are factually incorrect or just stupid, but I do so hoping that I can share some of my discoveries. All the data is available for your own analysis and comments are open if you want to put me in my place <-that was my disclaimer

In this post I’m going to have another look at getting sentiment analysis data for a tweet stream and compare two free APIs (ViralHeat and Text-Processing) against some manually sentiment-analysed data. In conclusion, the Text-Processing Sentiment API proved to be more accurate at calculating overall sentiment than ViralHeat, but each had only an 18% chance of detecting the right sentiment within an individual tweet.

Method

Having already looked at ‘Using the Viralheat Sentiment API and a Google Spreadsheet of conference tweets to find out how that keynote went down’, I decided to reuse the data obtained from the tweet stream during Donald Clark’s ALT-C 2010 keynote.

ViralHeat

This data was generated by sending individual tweets (n.561) to the ViralHeat Sentiment API, which returned a positive or negative classification together with a probability factor between 0 and 1 indicating the confidence level. The positive/negative classifications were turned into numeric values by converting ‘positive’ to +1 and ‘negative’ to –1 and multiplying by the probability. These values were accumulated and graphed to plot how tweet sentiment changed over time.
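The scoring step above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the Google Apps Script I actually used; the function name `viralheat_score` and the sample results are made up for the example.

```python
from itertools import accumulate


def viralheat_score(label, probability):
    """Turn a 'positive'/'negative' label into +1/-1 and weight it by confidence."""
    sign = {"positive": 1, "negative": -1}[label]
    return sign * probability


# Hypothetical API results in tweet order: (label, probability).
results = [("positive", 0.9), ("negative", 0.6), ("positive", 0.75)]

# One signed score per tweet.
scores = [viralheat_score(label, p) for label, p in results]

# Running total of the scores; this is the series that gets graphed over time.
running_total = list(accumulate(scores))
```

A rising running total means the stream is trending positive; a falling one means it is trending negative.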

Text-Processing

Using a slight modification to the Google Apps Script used to collect the ViralHeat data, the same set of tweets was passed to the Text-Processing Sentiment API. The data returned for each tweet consisted of the label ‘pos’ (positive), ‘neg’ (negative) or ‘neutral’ and a probability for each of the labels. The label returned was determined by the API using a hierarchical classification whereby neutrality is calculated as a standalone value. If neutrality has a probability greater than 0.5 then the label returned is ‘neutral’. Positive and negative sentiment probabilities are balanced to add up to 1. To illustrate this, below is an example of the data returned:

    {
      "probability": {
        "neg": 0.39680315784838732,
        "neutral": 0.28207586364297021,
        "pos": 0.60319684215161262
      },
      "label": "pos"
    }

Similar to the ViralHeat data, positive and negative labels were turned into numeric values by multiplying the probability by +1 for positive and –1 for negative labels (neutral labels were ignored, n.161). These values were also accumulated.
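To make the labelling rule concrete, here is a rough Python sketch that re-derives the label from the three probabilities and then computes the signed score. The 0.5 neutral cut-off comes from the description above; the function names are my own, and the code re-implements the rule locally rather than calling the API.

```python
def label_from_probabilities(prob):
    """Neutral wins if its probability is above 0.5; otherwise pick the larger of pos/neg."""
    if prob["neutral"] > 0.5:
        return "neutral"
    return "pos" if prob["pos"] > prob["neg"] else "neg"


def text_processing_score(response):
    """Return +p for 'pos', -p for 'neg', or None for 'neutral' (ignored in the totals)."""
    label = response["label"]
    if label == "neutral":
        return None
    sign = 1 if label == "pos" else -1
    return sign * response["probability"][label]


# The example response shown above.
example = {
    "probability": {
        "neg": 0.39680315784838732,
        "neutral": 0.28207586364297021,
        "pos": 0.60319684215161262,
    },
    "label": "pos",
}

derived_label = label_from_probabilities(example["probability"])
score = text_processing_score(example)
```

For the example response, neutral is below 0.5 and ‘pos’ beats ‘neg’, so the derived label agrees with the one the API returned.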

Manual Analysis

The 561 tweets were printed off as a table with no extra data. Each tweet was individually assessed (by me – not trained in social research in any way, btw) and marked as having positive, negative or neutral sentiment. These values were entered into the spreadsheet and converted to 1, –1 or 0 for positive, negative and neutral. As with the Text-Processing data, neutral tweets were ignored (n.422), leaving 139 data points.

Results

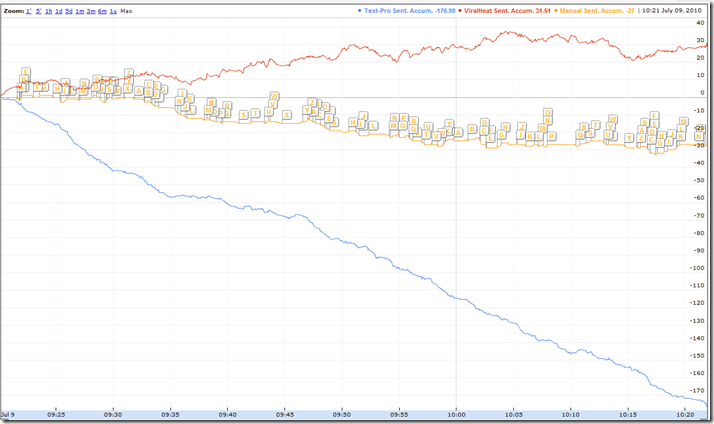

The graph below shows the accumulated sentiment scores for the manual analysis compared to the results from Text-Processing and ViralHeat plotted against the time the tweet was made (09/07/2010 09:20:12 to 09/07/2010 10:21:55) [click to see interactive version – takes a couple of seconds to appear].

The graph shows that the ViralHeat scores display an overall positive trend whilst the manual analysis and Text-Processing scores show an overall negative sentiment in the tweets, perhaps suggesting that the Text-Processing data is more accurate at calculating overall sentiment than ViralHeat with the given data.

As the ViralHeat data doesn’t appear to have hierarchical classification, which indicates if text is neutral, I decided to filter the data and only use results which had a probability factor within the top 10%. This resulted in a subset of ViralHeat data (n.56) which had a probability of greater than 0.987 and Text-Processing (n.40*) with a probability greater than 0.867.

*less because 161 data points were already excluded as they were neutral
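A filter like this could be sketched as follows. I’m assuming the cut is made by ranking results on probability and keeping the top tenth (561 gives 56, 400 gives 40, which matches the counts above); the function name and the sample data are hypothetical.

```python
def top_percent(results, percent=10):
    """Keep the highest-probability results, preserving the original tweet order.

    Ties at the cut-off probability are all kept, so the result can be
    slightly larger than the requested share.
    """
    keep = len(results) * percent // 100  # integer arithmetic: 561 -> 56, 400 -> 40
    if keep == 0:
        return []
    ranked = sorted((r["probability"] for r in results), reverse=True)
    cutoff = ranked[keep - 1]
    return [r for r in results if r["probability"] >= cutoff]


# Hypothetical results: 20 tweets with probabilities 0.00, 0.05, ..., 0.95.
results = [{"id": i, "probability": i / 20} for i in range(20)]
confident = top_percent(results)
```

With 20 sample results, the filter keeps the two with the highest probability.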

The graph which includes only the top 10% of sentiment scores from ViralHeat and Text-Processing is shown below [click to see interactive version – takes a couple of seconds to appear]. The effect of this filter is that the overall positive and negative sentiment scores for both ViralHeat and Text-Processing are reduced (this is expected as there are fewer data points).

Whilst it looks like Text-Processing is more accurate at detecting overall sentiment, on a tweet-by-tweet basis it’s a different story. When I manually recorded sentiment data for each tweet within the dataset I was able to assign 139 with either positive (n.56) or negative (n.83) sentiment. Text-Processing returned positive (n.81) and negative (n.319) for 400 tweets and ViralHeat returned positive (n.296) and negative (n.265) for all 561 of the tweets. When comparing the returned sentiment with the manually processed tweets, Text-Processing returned 72 results with matching sentiment (72 of 400, 18%) and ViralHeat 99 (99 of 561, 18%).
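The comparison can be sketched like this. The percentages above are consistent with dividing the number of matches by the number of tweets each API classified, so that is what the sketch assumes; the function name and the toy labels are invented for illustration.

```python
def match_rate(api_labels, manual_labels):
    """Count tweets where the API label equals the manual (non-neutral) label.

    Both arguments map tweet id -> 'pos' or 'neg'. Tweets judged neutral are
    simply absent from the relevant dict, so they can never count as a match.
    """
    if not api_labels:
        return 0, 0.0
    matches = sum(
        1
        for tweet_id, label in api_labels.items()
        if manual_labels.get(tweet_id) == label
    )
    return matches, matches / len(api_labels)


# Toy data: tweet 4 was neutral in the manual pass, so it cannot match.
manual = {1: "pos", 2: "neg", 3: "neg"}
api = {1: "pos", 2: "pos", 3: "neg", 4: "neg"}
matches, rate = match_rate(api, manual)

# The figures reported in the post, as rounded percentages.
text_processing_pct = round(72 / 400 * 100)
viralheat_pct = round(99 / 561 * 100)
```

Note that with this denominator the best possible score is capped by the number of non-neutral manual labels (139), so neither API could have reached 100% here.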

Conclusion

Hmm. Sentiment analysis of individual tweets might only be around 20% accurate using off-the-shelf tools with the data I looked at. This, however, might be enough if you are looking to filter large volumes of data. Both ViralHeat and Text-Processing have options that could potentially increase the accuracy, for example by using a different text classification (it is also worth noting that Text-Processing is built using the open source Python Natural Language Toolkit, so even more tweaking is possible).

I’m sure more conclusions can be drawn, so why not have a look at the data I’ve captured and leave some notes in the comments 😉
