Did you tune in to Donald Clark’s “Don’t Lecture Me!” keynote at ALT-C 2010, or were you at Joe Dale’s FOTE10 presentation? These presentations have two things in common: both Donald and Joe posted their reflections on a ‘hostile’ twitter backchannel (see Tweckled at ALT! and Facing the backchannel at FOTE10), and I provided a way for them to endlessly relive their experience with a twitter subtitle mashup. Wasn’t that nice of me 😉 (see iTitle: Full circle with Twitter subtitle playback in YouTube (ALT-C 2010 Keynotes) and Making ripples in a big pond: Optimising videos with an iTitle Twitter track)

Something I’ve been meaning to do for a while is find a way to quickly (and freely) analyse a twitter stream to identify the audience sentiment. You can pay big bucks for this type of solution, but fortunately viralheat have a sentiment API which gives developers 5,000 free calls per day. To use it you push some text to their API and you get back the mood of the text and the probability that the detected sentiment is correct (more info here).

So here is a second-by-second sentiment analysis of Donald and Joe’s presentations, followed by how it was done (including some caveats about the data).

Donald Clark – Don’t Lecture Me! from ALT-C 2010

Open Donald Clark’s ALTC2010 Sentiment Analysis in new window

Joe Dale – Building on firm foundations and keeping you connected in the 21st century. This time it’s personal! FOTE10

Open Joe Dale’s Sentiment Analysis in new window

How it was done

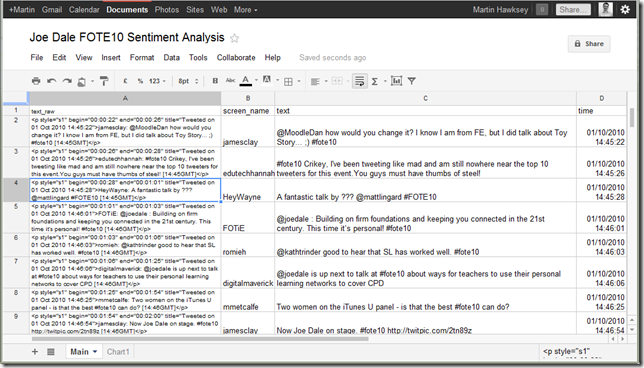

Step 1: formatting the source data

This first part is very specific to the data source I had available. If you already have a spreadsheet of tweets (or other text) you can skip to the next part.

All viralheat needs is chunks of text to analyse. When I originally added the twitter subtitles to the videos I pulled the data from the twitter archive service Twapper Keeper but since March this year the export function has been removed (BTW Twapper Keeper was also recently sold to Hootsuite so I’m sure more changes are on the horizon). I also didn’t get a decent version of my Google Spreadsheet/Twitter Archiver working until February so had to find an alternate data source (To do: integrate sentiment analysis into twitter spreadsheet archiver ;).

So instead I went back to the subtitle files I generated in TT-XML format. Here’s an example line:

<p style="s1" begin="00:00:28" id="p3" end="00:01:01" title="Tweeted on 01 Oct 2010 14:45:28">HeyWayne: A fantastic talk by ??? @mattlingard #FOTE10 [14:45GMT]</p>

The format is some metadata (display times, date), then who tweeted the message, what they said and a GMT timestamp. The bits I’m interested in are the message and the date metadata, but in case I needed it later I also extracted who tweeted the message. Putting each of the <p> tags into a spreadsheet cell, it’s easy to extract the parts I want using these formulas:

Screen name (in column B)

- =MID(A2,FIND(">",A2)+1,(SEARCH(":",A2,FIND(">",A2))-FIND(">",A2)-1))

Tweet (in column C)

- =MID(A2,FIND(">",A2)+3+LEN(B2),LEN(A2)-FIND(">",A2)-LEN(B2)-16)

Date/time (in column D)

- =VALUE(MID(A2,FIND("Tweeted on",A2)+11,20))

You can find more information about the cell formulas used elsewhere, but briefly:

- MID extracts some subtext based on a start point and length;

- FIND finds the first occurrence of some text in text;

- SEARCH, given a start position as its third argument, finds the first occurrence of some text after a specific point (in this case I knew ‘:’ marked the end of who tweeted the message, but searching from the start of the cell would have returned the position of the ‘:’ in begin=);

- LEN is the number of characters in a cell;

- VALUE is used to convert a text string into another format like a number or in this case a date/time
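The same extraction can be sketched outside the spreadsheet. This is a minimal sketch, not part of the original recipe: the function name `parseSubtitleLine` and the regular expression are my own, and it assumes every subtitle line follows exactly the layout of the example line above (a `title="Tweeted on …"` attribute, then `name: tweet [HH:MMGMT]`).

```javascript
// Split one TT-XML subtitle line into screen name, tweet text and date text.
// Assumption: the line has the same layout as the example in this post:
//   <p ... title="Tweeted on DD Mon YYYY HH:MM:SS">name: tweet [HH:MMGMT]</p>
function parseSubtitleLine(line) {
  const pattern =
    /<p[^>]*title="Tweeted on ([^"]+)"[^>]*>([^:]+): (.*?)\s*\[\d{2}:\d{2}GMT\]<\/p>/;
  const match = pattern.exec(line);
  if (match === null) {
    return null; // the line does not follow the expected layout
  }
  return {
    screenName: match[2], // text before the first ':' after the opening tag
    tweet: match[3],      // message without the trailing [HH:MMGMT] stamp
    tweetedOn: match[1]   // kept as text, e.g. "01 Oct 2010 14:45:28"
  };
}
```

The date is left as text rather than converted, which mirrors the MID step; converting it is what VALUE does in the spreadsheet version.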

This gives me a spreadsheet which looks like this:

Step 2: Using Google Apps Script to get the sentiment from viralheat

Google Apps Script is great for automating repetitive tasks. So in the Tools > Script editor… you can drop in the following snippet of code which loops through the text cells and gets a mood and probability from viralheat:

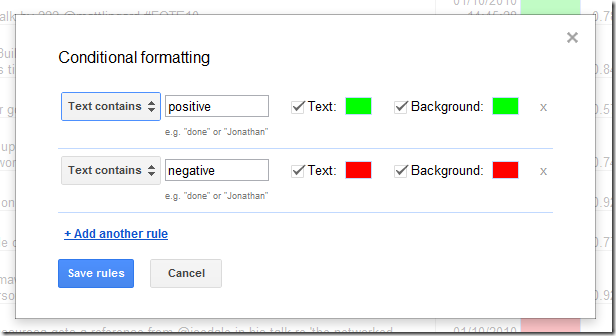
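For readers who want the shape of such a loop in text form, here is a minimal sketch. It is not the original script. The endpoint URL, the query parameter names and the `mood` and `prob` field names are all placeholders I have assumed for illustration; check viralheat’s own documentation for the real ones. The fetching function is passed in so the loop can be tried without making any API calls.

```javascript
// Hypothetical endpoint: a placeholder, not viralheat's documented URL.
const SENTIMENT_ENDPOINT = "https://example.invalid/sentiment";

// Build the request URL for one piece of text (parameter names are assumed).
function buildSentimentUrl(text, apiKey) {
  return SENTIMENT_ENDPOINT +
    "?api_key=" + encodeURIComponent(apiKey) +
    "&text=" + encodeURIComponent(text);
}

// Loop over the tweets and collect [mood, probability] for each one.
// fetchText is any function that takes a URL and returns the response body
// as a string. In Apps Script that could be something like:
//   function (url) { return UrlFetchApp.fetch(url).getContentText(); }
function scoreTweets(tweets, apiKey, fetchText) {
  return tweets.map(function (tweet) {
    try {
      const reply = JSON.parse(fetchText(buildSentimentUrl(tweet, apiKey)));
      return [reply.mood, reply.prob]; // assumed field names
    } catch (err) {
      return ["", ""]; // leave both cells blank if a call or parse fails
    }
  });
}
```

The result is a two-dimensional array with one row per tweet, which is the shape a spreadsheet range expects when writing values back next to the tweets. With a daily allowance of 5,000 free calls, each tweet costs one call.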

Step 3: Making the data more readable

If the script worked you should have some extra columns with the returned mood (positive or negative) and a probability factor. To make the data more readable I did a couple of things. For the mood I used conditional formatting to turn the cell green for positive and red for negative. To do this, select the column with the mood values, then open Format > Conditional formatting and add these rules:

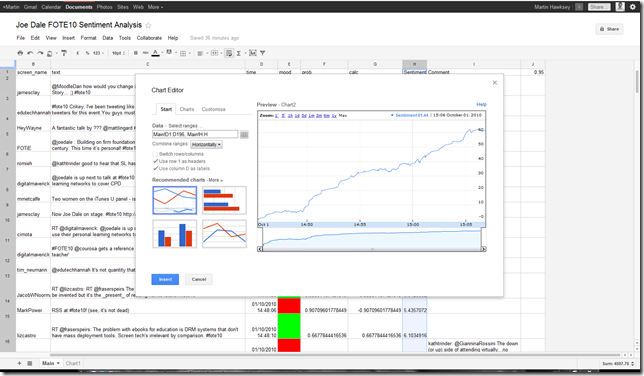

In the examples above you’ll see I graphed a sentiment rating over time. To do this I converted ‘negative’ and ‘positive’ into the values -1 and 1 using this formula, where the returned mood is in column E:

- =IF(E2="positive",1,-1)

I also wanted to factor in the probability, so I multiplied the value by it:

- =IF(E2="positive",1,-1)*F2 (where the probability factor is in column F)

These values were then accumulated over time.
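As a sketch of that accumulation step, assuming the rows are already sorted by time and each carries a `mood` and a `prob` value (both names are mine, chosen for illustration):

```javascript
// Turn each row into a signed, probability-weighted score and keep a
// running total, mirroring =IF(E2="positive",1,-1)*F2 summed down the sheet.
// Assumption: rows are already in time order.
function accumulateSentiment(rows) {
  let runningTotal = 0;
  return rows.map(function (row) {
    // Like the spreadsheet formula, anything that is not "positive" counts as -1.
    const sign = row.mood === "positive" ? 1 : -1;
    runningTotal += sign * row.prob;
    return runningTotal;
  });
}
```

One consequence of the formula worth knowing: a row with a blank mood (for example where an API call failed) is counted as negative, so it may be worth filtering those out first.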

Using the time and accumulated sentiment columns you can then Insert > Chart, and if not already suggested, use the Trend > Time line chart.

One last trick I did, which I’ll let you explore in the published spreadsheets I’ll link to shortly, is extract and display certain tweets which are above a threshold probability.
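The published spreadsheets do this with formulas. As a rough equivalent in code, here is a minimal sketch of a threshold filter; the function name `pickConfidentTweets` and the row fields `tweet`, `mood` and `prob` are hypothetical names of my own.

```javascript
// Keep only the rows whose probability is above the threshold.
// If a mood ("positive" or "negative") is given, keep only rows with that mood.
function pickConfidentTweets(rows, threshold, mood) {
  return rows.filter(function (row) {
    const confident = row.prob > threshold;
    const moodMatches = mood === undefined || row.mood === mood;
    return confident && moodMatches;
  });
}
```

Calling `pickConfidentTweets(rows, 0.9, "negative")` would, for example, return only the negative tweets the API is more than 90% sure about, which are good candidates for annotating a chart.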

As promised, here are the spreadsheets (you can reuse them via File > Make a copy):

Some quick notes about the data

A couple of things to bear in mind when looking at this data:

Noise – Not all of the analysed tweets necessarily relate to the talk, so if someone didn’t like the coffee at the break, or someone is tweeting about the previous talk they liked, that will affect the results.

Quoting presenter – Donald’s talk was designed to get the audience to question the value of lectures, so if he made negative statements about that particular format that were then quoted by someone in the audience, they would be recorded as negative sentiment.

Sometimes it’s just wrong – and let’s not forget there may be times when viralheat just gets it wrong (there is a training API 😉).

There is probably more to say: is there a way to link the playback with a portion of the sentiment chart, or should I explore a way to use Google Apps Script and viralheat to automatically notify conference organisers of the good and the bad? But that’s why I have a comment box 😉

Donald Clark

This is a very neat tool. I was asked whether I’d be happy with a twitter annotated version of the talk on YouTube and readily agreed. With over 13,000 views it made my point; that if you want to lecture, the scalable distribution of recorded lectures is essential. The reaction I’ve had from these viewers has been the converse of those who tweckled, showing an increasing lack of realism among HE folk, regarding the way they teach. As Peter Thiel says, to even question the dubious practices in HE is like saying that Santa Claus doesn’t exist – a sure sign that they’re in a bubble.

Martin Hawksey

Hi Donald – increasing lack of realism, possibly, but there is also an increasing group who know and do something different. A couple of years ago I was fortunate to briefly work with Jim Boyle at the University of Strathclyde. Jim recognised long ago (over a decade) that passive learning wasn’t, and never was, appropriate for learning, and in searching for a better way he adopted, amongst other things, Eric Mazur’s Peer Instruction techniques. When I was working with Jim he was still looking for new ways to improve the way students learned, and if you haven’t already seen it I’d wholeheartedly recommend you watch his ESTICT keynote Truth, Lies and Voting Systems, which in part looks at the issues of using PowerPoint in teaching and learning.

But why isn’t HE full of Jims? Why aren’t all educators looking to educate with the tools at their disposal? I believe part of the problem is ‘expectations’, and not just the expectation of academics that they need to stand up in front of a room and talk for 50 minutes. No, the problem is bigger than that. There is the expectation by your colleagues and head of department that you as an academic will stand up for 50 minutes twice a week and lecture your class, there is the expectation by the institution that you as an academic will stand up for 50 minutes twice a week and lecture your class, there is the expectation by the professional body who accredit your course and have supplied you an outline curriculum that you will stand up for 50 minutes twice a week, and unfortunately students themselves have an expectation of university life which includes you as an academic standing up for 50 minutes twice a week.

There will always be individuals within an institution using good teaching practice but to turn these from the minority to the majority needs the institution to have and sell a different expectation of teaching and learning. The OU already do this, Aalborg University and their institution wide PBL approach do this and University of Lincoln’s ‘Student as producer’ has the potential to do it and there will be others but not enough.

That’s what I think anyway

Martin

PS Here’s a variation of the ALT-C sentiment graph that includes negative comments with a probability greater than 0.9

Saqib Ali

This is very cool, Martin. I am harvesting the code to analyze blog posts about our new product releases!!! 🙂

Thanks for sharing this.

A Couple More Webrhythm Identifying Tools « OUseful.Info, the blog…

[…] trend analysis, is sentiment analysis. Martin has started tinkering around this already: Using the Viralheat Sentiment API and a Google Spreadsheet of conference tweets to find out how that… […]

Updates to Twitter Archiving Google Spreadsheet v2.3 [TAGS] « MASHe

[…] are also some easter eggs in the code. The main ones are the code I use to grab ViralHeat sentiment analysis of tweets and some code to generate data for my Protovis gadget. […]

TAGSExplorer: Interactively visualising Twitter conversations archived in a Google Spreadsheet « MASHe

[…] #studentexp. I’d captured the #studentexp tagged tweets using my TAGS spreadsheet, used my recipe get sentiment analysis from ViralHeat and imported the data into NodeXL to start exploring some of the tweets and conversations from the […]

Mat Morrison

I’m bearish about sentiment analysis in general, and Twitter Sentiment in particular; but I’m v. grateful you’ve shared this; and I love the methodology.

I’ve done some trivial work on the paradox of sentiment scoring (best summarised as: if sentiment isn’t a proxy for some quantitative variable like “opening weekend box-office”, “sales” or “share price”, then who’s judging accuracy?)

I’ve some hope that longer corpora of combined tweets (i.e. longer than the 140 byte-length units you seem to be measuring here) might one day prove a bit useful.

What do you think? Are you convinced by the data? Would a different API have given you radically different data?

Martin Hawksey

Hi Mat, yes I’m not entirely convinced by the data. One of the things I wanted to do was go through all the data, marking it up with my own interpretation of the tweet sentiment, and then compare it with the data from viralheat just to see if it was even in the right ball park.

The good thing about the data returned is it includes a probability factor. I used tweets with a high probability of having the correct sentiment detection to annotate the chart. So one way of looking at it is the recipe provides a way to sift large volumes of data for some tweets that are very likely to be positive or negative.

I wasn’t aware of any other free APIs, but I’ve just found this API that uses the Python NLTK. Tony Hirst did also point me to a Python library, but I can’t remember which one (it might be the NLTK; the good thing about NLTK is that it includes neutral responses, so I must compare it to viralheat).

Martin

Martin Hawksey

I’ve compared viralheat with text-processing against a collection of tweets I manually analysed. It might have been more luck than anything else but text-processing was able to get the overall sentiment of just over 500 tweets. On a tweet-by-tweet basis it (and viralheat) only managed 20% accuracy. More here

Martin

Sentiment Analysis of tweets: Comparison of ViralHeat and Text-Processing Sentiment API – MASHe

[…] detecting the right sentiment within a tweet. Method: Having already looked at Using the Viralheat Sentiment API and a Google Spreadsheet of conference tweets to find out how that… I decided to reuse the data obtained from the tweet stream during Donald Clark’s ALT-C 2010 […]

Visualising the #ili2011 Twitter archive JISC CETIS MASHe

[…] conference I suspect you’ll find a lot of positive comments. I can confirm by using my Using the Viralheat Sentiment API and a Google Spreadsheet of conference tweets to find out how that… recipe that there were a lot of positive comments […]

Viralheat Social Sentiment Analysis Chrome Plugin Now Supports Facebook! – Viralheat Social Media Strategy Blog

[…] Tracking a conference keynote: Martin Hawksey (@mhawksey) cleverly combined the use of the sentiment API and a spreadsheet of conference tweets to find out how two separate keynotes went and stacked up against each other. […]

Live Twitter data from FOTE #fote11 [Top Tweeters, Sentiment, Hashtag Network Diagram, Conversation Diagram] JISC CETIS MASHe

[…] Spreadsheets as a data source to analyse extended Twitter conversations in NodeXL (and Gephi) and Using the Viralheat Sentiment API and a Google Spreadsheet of conference tweets to find out how that… I was able to import sentiment data for each tweet (edge). I then accumulated the sentiment […]