I was delighted to have the opportunity to speak at DevFest London about Google Analytics. One of the huge benefits of being in the Google Developer Experts program is the opportunity to meet experts from other products. In particular, I’ve greatly appreciated connecting with the Google Analytics GDEs. Meeting them, seeing them talk and following what they do has greatly expanded my own expertise in GA. For my talk at DevFest my aim was to share some of what I’ve learned and to get people thinking about Google Analytics beyond websites and pageviews, offering a glimpse of the opportunities for easy data collection and actionable insight. The talk was entitled ‘The Google Analytics of Things’. It is one I’ve given before, but I tweaked it for the Tech Entrepreneurship strand I was speaking in (slides from my talk can be viewed here).

Using face detection with Google Analytics

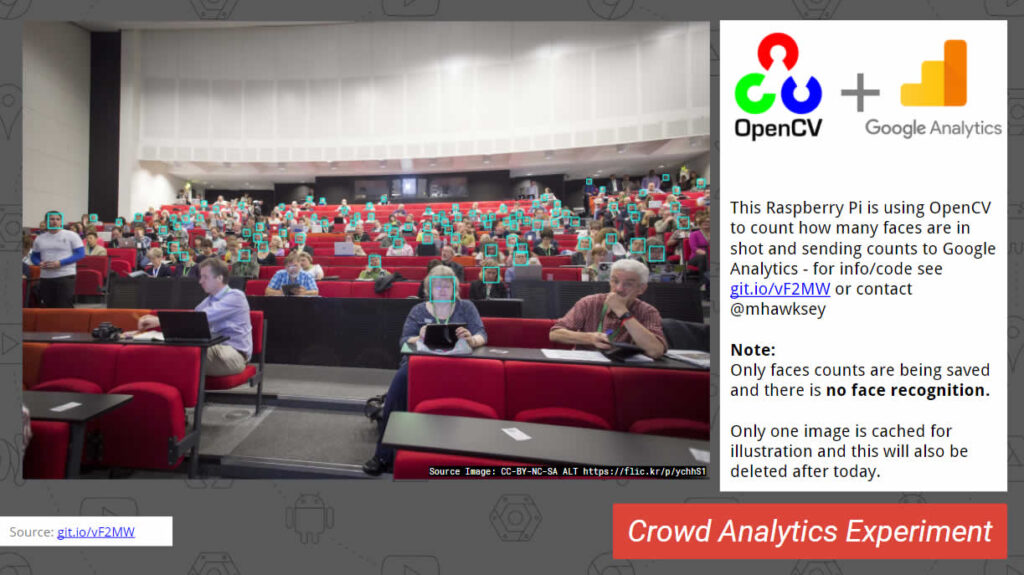

To demonstrate how easy it is to integrate Google Analytics, I developed an example inspired by GA GDE Nico Miceli’s physical analytics experiments, in which he uses a Raspberry Pi and various sensors to record physical events around his home. An idea I wanted to try was using a Pi camera and face detection to measure audience density. There are various real-world applications for this, such as estates management and analysing room or corridor usage. Achieving this is incredibly easy thanks to the OpenCV (Open Source Computer Vision) library, which includes face detection. Using Carlo Mascellani’s Face detection with Raspberry Pi instructions, I was sending data to Google Analytics in under 15 minutes. If you’d like to run this yourself, here is the code:

import io

import cv2
import numpy
import picamera
import requests

GA_TRACKING_ID = "YOUR_UA_TRACKING_ID_HERE"


def hitGA(faces):
    """Send the number of detected faces to Google Analytics as an event."""
    print("Sending to GA")
    # Measurement Protocol (v1) event hit; the event value (ev) must be an integer
    params = {
        "v": "1",
        "tid": GA_TRACKING_ID,
        "cid": "1111",
        "t": "event",
        "ec": "FaceDetection",
        "ea": "faces",
        "el": "DevFest17",
        "ev": str(faces),
    }
    requests.get("https://www.google-analytics.com/collect", params=params).close()


# Based on Face detection with Raspberry Pi
# For org. + setup http://rpihome.blogspot.co.uk/2015/03/face-detection-with-raspberry-pi.html
# Modified by mhawksey

# Load a cascade file for detecting faces (only needs to be loaded once)
face_cascade = cv2.CascadeClassifier('/usr/share/opencv/haarcascades/haarcascade_frontalface_alt.xml')

while True:
    # Create a memory stream so photos don't need to be saved in a file
    stream = io.BytesIO()

    # Here you can also specify other parameters (e.g. rotate the image)
    with picamera.PiCamera() as camera:
        camera.resolution = (2592, 1944)
        camera.iso = 800
        camera.capture(stream, format='jpeg')

    # Convert the picture into a numpy array
    buff = numpy.frombuffer(stream.getvalue(), dtype=numpy.uint8)

    # Now create an OpenCV image
    image = cv2.imdecode(buff, 1)

    # Convert to grayscale
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

    # Look for faces in the image using the loaded cascade file
    faces = face_cascade.detectMultiScale(gray, 1.1, 5)
    print("Found " + str(len(faces)) + " face(s)")

    # Send faces counted to GA
    hitGA(len(faces))

    # Draw a rectangle around every found face
    for (x, y, w, h) in faces:
        cv2.rectangle(image, (x, y), (x + w, y + h), (255, 255, 0), 2)

    # Show the result image
    imS = cv2.resize(image, (640, 360))
    cv2.imshow('frame', imS)
    k = cv2.waitKey(1000)
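The GA part of the script is just a Measurement Protocol (v1) hit with a handful of query parameters. To see exactly what gets sent without a Pi or a network connection, the hit can be built as a plain URL. This is a minimal sketch: `build_face_event`, `build_hit_url` and the `UA-XXXXX-Y` property ID are illustrative placeholders, not part of the original script.

```python
from urllib.parse import urlencode

# Universal Analytics Measurement Protocol (v1) collection endpoint
COLLECT_URL = "https://www.google-analytics.com/collect"


def build_face_event(tracking_id, client_id, face_count, label="DevFest17"):
    """Return the query parameters for one face-count event hit (hypothetical helper)."""
    if face_count < 0:
        raise ValueError("event value must be a non-negative integer")
    return {
        "v": "1",               # protocol version
        "tid": tracking_id,     # UA property ID (placeholder here)
        "cid": client_id,       # anonymous client ID for this Pi
        "t": "event",           # hit type
        "ec": "FaceDetection",  # event category
        "ea": "faces",          # event action
        "el": label,            # event label
        "ev": str(int(face_count)),  # event value: must be an integer
    }


def build_hit_url(params):
    """Encode the parameters into a full GET URL (no request is made)."""
    return COLLECT_URL + "?" + urlencode(params)


url = build_hit_url(build_face_event("UA-XXXXX-Y", "1111", 3))
print(url)
```

Keeping the event value as an integer matters because GA expects `ev` to be a whole number; a count of faces fits that naturally.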

Given I’d done no prior testing, I was very pleased with the results: the setup was able to record and send data to Google Analytics every 60 seconds. By exporting the event data from Google Analytics with a minute index, it is possible to graph the average event value.
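The average event value for each minute is the total event value divided by the number of events in that minute. A minimal sketch of that step on a CSV export, assuming the export has columns named `Minute Index`, `Total Events` and `Event Value` (the column names and the sample rows below are made up for illustration, not data from the talk):

```python
import csv
import io
from collections import defaultdict


def average_by_minute(csv_text):
    """Return {minute_index: average event value} from exported rows."""
    totals = defaultdict(lambda: [0, 0])  # minute -> [sum of event values, event count]
    for row in csv.DictReader(io.StringIO(csv_text)):
        minute = int(row["Minute Index"])
        totals[minute][0] += int(row["Event Value"])
        totals[minute][1] += int(row["Total Events"])
    return {
        minute: value / count
        for minute, (value, count) in sorted(totals.items())
        if count  # skip minutes with no events to avoid dividing by zero
    }


# Illustrative input only
sample = """Minute Index,Total Events,Event Value
0,1,12
1,1,18
1,1,22
2,2,60
"""
print(average_by_minute(sample))  # {0: 12.0, 1: 20.0, 2: 30.0}
```

The resulting dictionary can be plotted directly as minute against average faces detected.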

Whilst face detection isn’t 100% accurate (there were a lot more than 40 people at my talk … honest), I’m hopeful others will find this recipe useful and can perhaps improve it and analyse how reliable it is.

Easter egg … updating a Google Slides deck with an image from OpenCV (Raspberry Pi)

For my presentation I tweaked this slightly so that the image with the most faces detected was automatically inserted into my slides. I could have used the Google API Python client library to do this, but being a Google Apps Script kinda guy, I simply made a POST request from my Pi to my Google Slides deck via the new Google Apps Script Slides Service. As posting images in this way might be useful, here is some code to do this:

Google Slides (added to your Slides presentation via Tools > Script editor)

/**
 * @OnlyCurrentDoc
 */

function doPost(e) {
  try {
    // decode base64 into bytes[]
    var img_bytes = Utilities.base64Decode(e.postData.contents);
    // make image blob
    var img = Utilities.newBlob(img_bytes, e.postData.type, 'dump.jpg');
    // get the slides in the bound presentation
    var slides = SlidesApp.getActivePresentation().getSlides();
    // loop through the slides
    for (var i = 0; i < slides.length; i++) {
      // extract the speaker notes
      var notes = slides[i].getNotesPage().getSpeakerNotesShape().getText().asString();
      // look for a slide whose speaker notes start with {{IMAGE_REPLACE}}
      if (notes.indexOf('{{IMAGE_REPLACE}}') === 0) {
        // replace the 1st image in the slide with our image blob
        slides[i].getImages()[0].replace(img);
        // use a table element in the slide as a text placeholder and update it with a date stamp
        slides[i].getTables()[0].getCell(0, 0).getText().setText(new Date().toString());
      }
    }
    // send a message when done
    return ContentService.createTextOutput(JSON.stringify({result: 'ok'}))
        .setMimeType(ContentService.MimeType.JSON);
  } catch (err) {
    // Error objects stringify to "{}", so return the message explicitly
    return ContentService.createTextOutput(JSON.stringify({result: 'error', error: err.toString()}))
        .setMimeType(ContentService.MimeType.JSON);
  }
}
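Note that the `indexOf(...)` check in `doPost` only matches when the speaker notes *start* with the placeholder; a placeholder further into the notes is ignored. The matching rule can be illustrated with a small Python equivalent. This is a hypothetical helper for reasoning about which slides get updated, not code that runs in Apps Script:

```python
PLACEHOLDER = "{{IMAGE_REPLACE}}"


def slides_to_update(speaker_notes):
    """Return indexes of slides whose notes start with the placeholder.

    Mirrors the notes.indexOf(PLACEHOLDER) === 0 test in doPost.
    """
    return [i for i, notes in enumerate(speaker_notes) if notes.startswith(PLACEHOLDER)]


notes = ["Intro", "{{IMAGE_REPLACE}} live photo", "See {{IMAGE_REPLACE}}"]
print(slides_to_update(notes))  # [1]
```

If you would rather match the placeholder anywhere in the notes, the Apps Script test would need to become `notes.indexOf('{{IMAGE_REPLACE}}') !== -1`.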

This script is published as a web app, and its URL is included in the Python code below:

Python (additions running on Raspberry Pi)

import base64

import requests

maxFaces = -1

# Setup posting result to Slides
url = 'PUBLISHED_WEB_APP_URL_FROM_GOOGLE_APPS_SCRIPT'

# prepare headers for http request
content_type = 'image/jpeg'
headers = {'content-type': content_type}

The code above imports the requests and base64 libraries and sets some variables we’ll use later. If using this code, you’ll need to replace the url placeholder with the web app URL published from Google Apps Script in the previous step.
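The request body is simply the base64-encoded JPEG, which `doPost` turns back into bytes with `Utilities.base64Decode`. To check the payload without sending anything, the same request can be prepared with the standard library's `urllib.request.Request` instead of `requests`. A minimal sketch: `build_slides_request`, the example URL and the fake JPEG bytes are placeholders for illustration.

```python
import base64
import urllib.request

# Hypothetical placeholder: use the URL of your own published web app
URL = "https://script.google.com/macros/s/YOUR_DEPLOYMENT_ID/exec"


def build_slides_request(jpeg_bytes, url=URL):
    """Prepare (but do not send) the POST the Pi makes to the web app."""
    body = base64.b64encode(jpeg_bytes)  # decoded by Utilities.base64Decode in doPost
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "image/jpeg"}, method="POST"
    )


fake_jpeg = b"\xff\xd8\xff\xe0 not a real image \xff\xd9"
req = build_slides_request(fake_jpeg)
print(req.get_method(), req.get_header("Content-type"))  # POST image/jpeg
print(base64.b64decode(req.data) == fake_jpeg)  # True
```

The content type matters because `doPost` passes `e.postData.type` straight into `Utilities.newBlob`, so the header determines the blob's MIME type.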

Next, in the image-processing while loop, if the camera shot contains the most faces detected so far, the image is base64 encoded and sent as a POST request to the published web app URL set up for our Slides deck.

    # Inside the while loop, once faces has been detected
    facesInt = len(faces)

    # Save the result image if new maximum
    if facesInt > maxFaces:
        retval, jpeg = cv2.imencode('.jpg', image)
        img_encoded = base64.b64encode(jpeg.tobytes())
        response = requests.post(url, data=img_encoded, headers=headers)
        maxFaces = facesInt
        print(response.text)
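Because `maxFaces` starts at -1, the very first frame is always posted (even with zero faces), and a frame that only ties the current maximum is not. The selection rule can be checked in isolation with a small sketch; `frames_to_post` is a hypothetical helper that replays a list of face counts through the same `>` comparison:

```python
def frames_to_post(face_counts, start_max=-1):
    """Return indexes of frames that would be sent to Slides.

    A frame is posted only when its face count beats every earlier count,
    mirroring `if facesInt > maxFaces` with maxFaces starting at -1.
    """
    posted = []
    max_faces = start_max
    for i, count in enumerate(face_counts):
        if count > max_faces:
            posted.append(i)
            max_faces = count
    return posted


print(frames_to_post([0, 2, 2, 1, 5, 3]))  # [0, 1, 4]
```

Using `>=` instead would also post frames that tie the best count so far, which keeps the slide image fresher at the cost of more requests.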

The complete modified Python code is in this gist.

I hope you find this experiment useful and another example of how Google Analytics can support lean actionable insight.